MTI Journal.10

Engine Data Analysis by Utilizing AI and Machine Learning

Putu Hangga Nan Prayoga

Researcher, Maritime and Logistics Technology Group*

*The job title is as of February 12, 2020

Before joining MTI in April 2019, I took part in joint research between MTI and Kyushu University, where I worked as a specially appointed assistant professor in the field of system design and was engaged in many research activities. I also worked at a ship management company and at a shipowner before taking my postgraduate degree. Now at MTI, I am involved in various research projects, most of them related to the use of big data. Among them, I have put particular effort into utilizing ship engine data collected by the Ship Information Management System (SIMS) platform.

The era of big data and data science has already arrived, and the world has begun to introduce applications that use AI and machine learning to solve real-world problems. However, it is difficult to carry out such research in a university laboratory alone, because it depends on the huge amount of real data gathered every day. Fortunately, I was given the opportunity to participate in MTI’s research project, which was very exciting for me as a researcher. At the same time, the temptation to automate repetitive and labor-intensive processes with machine learning is a constant pull. Even if the path to adoption is slow and stony, machine learning is steadily moving from science into business processes, products, and real-world applications. Although many sophisticated algorithms have been developed, integrating them into existing processes and products remains difficult because it takes time for industry to catch up.

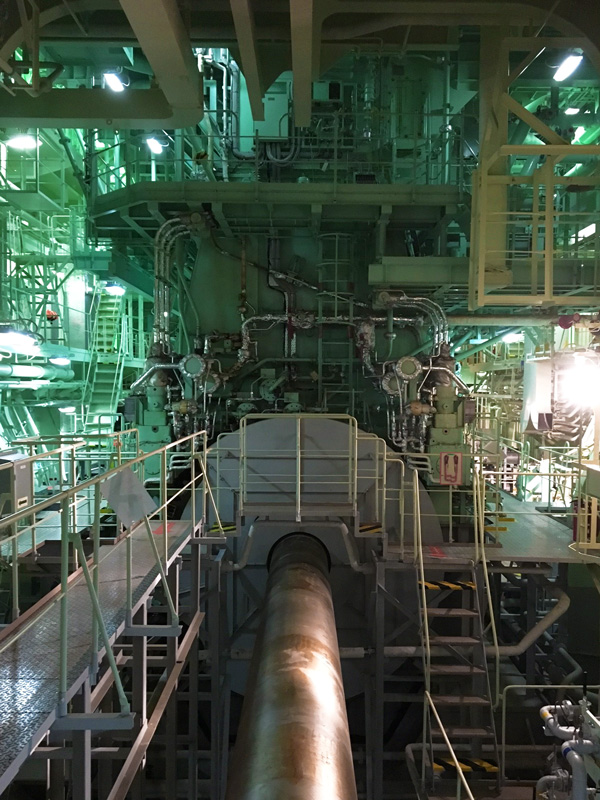

My first assignment was to detect engine abnormalities as early as possible, using engine telemetry data from more than 100 vessels that is stored and maintained in NYK’s cloud database. The engine room is a complex environment monitored in shifts by experienced marine engineers. Because of their various other duties, marine engineers are not always able to monitor the performance of all systems simultaneously. Thus, there is a growing demand for maintenance assistance systems that raise an alert when an important event occurs.

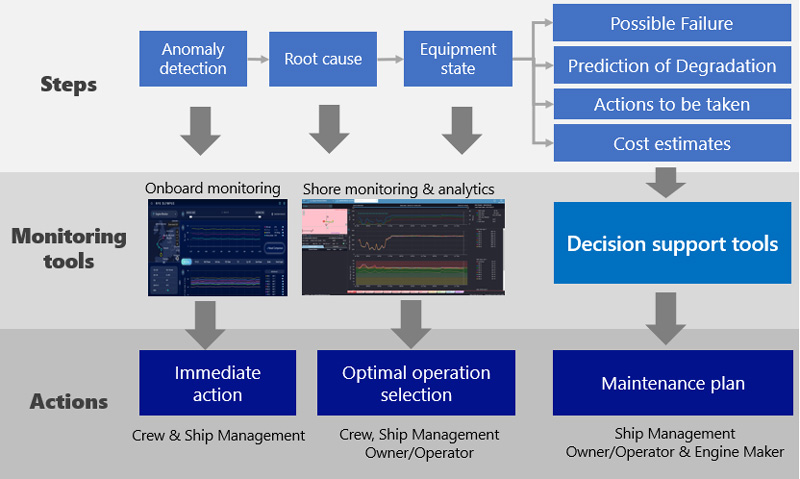

Sensor data collected from machinery systems on board ships provide real-time information on the condition of the ship and can be used to detect changes in system performance in near real time, changes that may indicate an impending failure or even a fault that has already begun. MTI has promoted the development of solutions that use machine learning, allowing engineers, ship managers, and the NYK Maritime Group to take advantage of the power of big data in order to make sound decisions. My task is to create AI solutions that automate the monitoring of the streaming data and detect deviations from normal behavior with high accuracy, in a way that marine engineers can understand.

Importance of data quality

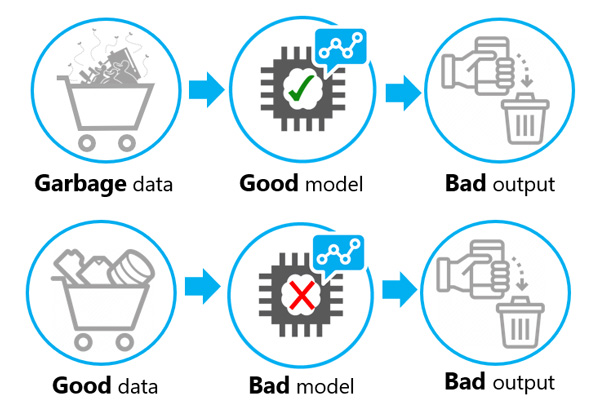

In the computer science world this is known as GIGO (garbage in, garbage out): it is one of the “truths” of technology that inappropriate input data produces inappropriate output. This is especially true of big data. No matter how long the collection period or how large the volume, data does not fall into a natural structure the way crystals do. The data I work with in my research project suffers from problems such as missing values, inaccurate and invalid values, timeliness issues, and inconsistent records. Feeding such data into an analytical model will naturally yield only meaningless results. Within MTI’s research activities, under NYK’s medium-term management plan “Staying Ahead 2022 with Digitalization and Green,” we receive support to launch a project that tries to apply a data quality management system conforming to the ISO planning to the utilization of SIMS data. In addition, we can work with external institutions on quality control of the transmitted sensor data. It is interesting to be able to work on developing that kind of framework in the first place.
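The four kinds of problems named above (missing values, invalid values, timeliness, inconsistent records) can be illustrated with a small screening step. This is only a minimal sketch and not the SIMS pipeline: the `Reading` record, the sensor name `exhaust_temp_c`, the valid range, and the ten-minute delay tolerance are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

# Hypothetical plausible range for one sensor; real limits would come from engine experts.
VALID_RANGE = {"exhaust_temp_c": (0.0, 700.0)}
MAX_DELAY = timedelta(minutes=10)  # assumed timeliness tolerance


@dataclass
class Reading:
    sensor: str
    value: Optional[float]   # None models a missing value
    measured_at: datetime    # time stamped on board
    received_at: datetime    # time the record reached shore


def quality_issues(r: Reading) -> List[str]:
    """Return the data quality problems found in a single reading."""
    issues = []
    if r.value is None:
        issues.append("missing")
    else:
        low, high = VALID_RANGE.get(r.sensor, (float("-inf"), float("inf")))
        if not (low <= r.value <= high):
            issues.append("out_of_range")
    if r.received_at < r.measured_at:
        # A record cannot arrive before it was measured.
        issues.append("inconsistent_timestamps")
    elif r.received_at - r.measured_at > MAX_DELAY:
        issues.append("late")
    return issues


t0 = datetime(2020, 2, 12, 0, 0)
readings = [
    Reading("exhaust_temp_c", 350.0, t0, t0 + timedelta(minutes=1)),
    Reading("exhaust_temp_c", None, t0, t0 + timedelta(minutes=1)),
    Reading("exhaust_temp_c", 9999.0, t0, t0 + timedelta(minutes=30)),
]
# Only readings without any issue are passed on to the analytical model.
clean = [r for r in readings if not quality_issues(r)]
```

Screening each record before it reaches the model keeps the reason for rejection visible, which matters when the rejected data has to be discussed with the people who maintain the sensors.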

GIGO Principle in Machine Learning

Importance of domain knowledge & model selection

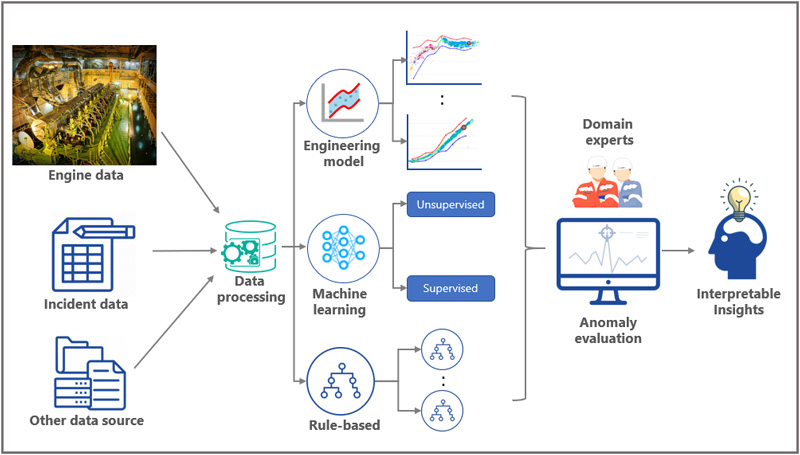

Even with the necessary and relevant data in hand, it can be difficult to determine which analytical models should be considered in conjunction with engine expertise. This is because the task I am dealing with is not a typical academic task in which the problem is clearly defined. Therefore, a research plan has to be drafted, and its possible benefits explained, iteratively. We are required to proactively present use cases in which the analysis results help solve problems, and to convince stakeholders that the problem is worth solving. In doing so, it is necessary to seek advice from engine experts (engineers).

Furthermore, building an analytical model is a labor-intensive and time-consuming process that consists of model selection, testing, validation, prototyping in a test environment, and building the UI/UX for users. My background has helped me scan hundreds of research papers to find analytical models that could solve the problem. At MTI, I also have opportunities to collaborate with external data scientists and research institutes to create entirely new analytical models and develop more sophisticated ones. The machine learning model currently being verified is an ensemble that consists of supervised models trained on data labeled using a failure database, unsupervised models that learn sudden changes in the multidimensional space created by the various sensor data, and an engineering model that learns the physics-related characteristics of the engine. This is an innovative ensemble learning model that combines the power of machine learning with consideration of the physical phenomena of the marine engine system when presenting analysis results.
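To make the idea of combining three kinds of detectors concrete, the following is a minimal sketch of score averaging across a supervised, an unsupervised, and a physics-based component. It is not the model being verified at MTI: each detector here is a simple stand-in, and the thresholds, the "normal" operating point, and the linear load-to-temperature relation are hypothetical numbers chosen only so the example runs.

```python
import math
from typing import Callable, Dict, Sequence

Sample = Dict[str, float]
Detector = Callable[[Sample], float]  # returns an anomaly score in [0, 1]


def clip01(v: float) -> float:
    return max(0.0, min(1.0, v))


def supervised_score(x: Sample) -> float:
    # Stand-in for a classifier trained on failure-labeled data:
    # a hypothetical learned threshold on exhaust temperature.
    return 1.0 if x["exhaust_temp_c"] > 450.0 else 0.0


def unsupervised_score(x: Sample) -> float:
    # Stand-in for a multivariate change detector: scaled distance
    # from an assumed normal operating point (70 % load, 380 deg C).
    d = math.sqrt(((x["load_pct"] - 70.0) / 20.0) ** 2
                  + ((x["exhaust_temp_c"] - 380.0) / 40.0) ** 2)
    return clip01(d / 3.0)


def physics_score(x: Sample) -> float:
    # Stand-in for an engineering model: residual between the measured
    # temperature and the value expected from load (hypothetical relation).
    expected = 250.0 + 2.0 * x["load_pct"]
    return clip01(abs(x["exhaust_temp_c"] - expected) / 100.0)


def ensemble_score(x: Sample, detectors: Sequence[Detector],
                   weights: Sequence[float]) -> float:
    """Weighted average of the individual anomaly scores."""
    total = sum(weights)
    return sum(w * d(x) for d, w in zip(detectors, weights)) / total


detectors = [supervised_score, unsupervised_score, physics_score]
weights = [1.0, 1.0, 1.0]

normal = {"load_pct": 70.0, "exhaust_temp_c": 390.0}
abnormal = {"load_pct": 70.0, "exhaust_temp_c": 500.0}
print(ensemble_score(normal, detectors, weights))
print(ensemble_score(abnormal, detectors, weights))
```

Keeping the physics-based score as a separate component means an alert can be traced back to a residual that an engineer can interpret, rather than only to an opaque combined number.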

Ensemble model incorporating machine learning and domain knowledge

It is also important to work on small sub-themes within the large system of abnormality detection. One is the development of analytical models that automatically detect the operating modes of ships. Engine data produces different sets of parameter values, each with a different tendency depending on the operating mode. Therefore, it is necessary to be able to distinguish the multidimensional profiles of such engine behaviors. This sub-theme is of particular interest to me because it is not only related to anomaly detection but can also be extended to many other areas, such as estimating the performance degradation of marine engines and developing autonomous ships.
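One simple way to picture operating-mode detection is clustering: readings taken in the same mode sit close together in the space of engine parameters. The sketch below uses plain k-means (Lloyd's algorithm) as an illustrative substitute; the article does not say which method is actually used. The two features (engine speed and load, both in percent), the sample values, and the three mode names in the comments are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def _dist2(a: Point, b: Point) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def _mean(points: Sequence[Point]) -> Point:
    n = len(points)
    return tuple(sum(c) / n for c in zip(*points))


def kmeans(points: Sequence[Point], init: Sequence[Point],
           iters: int = 20) -> Tuple[List[Point], List[int]]:
    """Plain k-means starting from the given initial centroids."""
    centroids = list(init)
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: each point joins its nearest centroid.
        labels = [min(range(len(centroids)),
                      key=lambda k: _dist2(p, centroids[k]))
                  for p in points]
        # Update step: each centroid moves to the mean of its members.
        new_centroids = []
        for k, c in enumerate(centroids):
            members = [p for p, lab in zip(points, labels) if lab == k]
            new_centroids.append(_mean(members) if members else c)
        if new_centroids == centroids:
            break  # converged
        centroids = new_centroids
    return centroids, labels


# (engine speed %, engine load %) samples, values invented for illustration
points = [
    (0.0, 0.0), (0.0, 2.0), (1.0, 1.0),        # e.g. in port
    (35.0, 20.0), (40.0, 25.0), (38.0, 22.0),  # e.g. maneuvering
    (80.0, 75.0), (82.0, 78.0), (78.0, 72.0),  # e.g. sea passage
]
init = [(0.0, 0.0), (40.0, 25.0), (80.0, 75.0)]
centroids, labels = kmeans(points, init)
print(labels)  # [0, 0, 0, 1, 1, 1, 2, 2, 2]
```

Once each reading carries a mode label, anomaly thresholds can be set per mode instead of globally, which is one reason this sub-theme feeds back into abnormality detection.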

As well as dealing with such a multi-faceted theme, we need to develop a working solution concept, which also needs to fit within the existing IT infrastructure and the corporate culture. Fortunately, MTI and NYK have plenty of marine and IT experts who advise me on overcoming that kind of dilemma. A wide variety of people with different specialties are involved in MTI’s research projects in order to build solutions that are truly useful for users. It’s really fun to learn from such professionals and to work on projects through fruitful discussions toward a common dream.

Paradox of big data and AI

I was really surprised by the unique range of expertise that can be absorbed when confronted with the unique systems of ships and the many types of data that can be studied. Some of this could be studied in school, but at MTI we learn directly from practitioners. At the same time, I have to conduct my research within budgets and tight deadlines. From a researcher’s point of view, handling a treasure trove of data that has the potential to produce various solutions but has not yet been fully exploited is exciting and challenging at the same time. For instance, I had to work out how to perform calculations faster than the data arrives while maintaining the accuracy of the output in near real time.
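Keeping up with incoming data usually means doing a constant amount of work per sample instead of recomputing over the whole history. As a hedged illustration of that idea, and not a description of the system built at MTI, the sketch below uses Welford's online algorithm to maintain a running mean and standard deviation and flags samples that deviate strongly from them. The threshold of three standard deviations, the warm-up length, and the sample values are assumptions.

```python
import math
from typing import Iterable, Iterator, Tuple


class RunningStats:
    """Welford's online algorithm: mean and variance in O(1) per sample."""

    def __init__(self) -> None:
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the running mean

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def std(self) -> float:
        return math.sqrt(self._m2 / (self.n - 1)) if self.n > 1 else 0.0


def stream_alerts(values: Iterable[float], threshold: float = 3.0,
                  warmup: int = 5) -> Iterator[Tuple[int, float]]:
    """Yield (index, value) for samples far from the history seen so far."""
    stats = RunningStats()
    for i, x in enumerate(values):
        is_outlier = (stats.n >= warmup and stats.std > 0
                      and abs(x - stats.mean) > threshold * stats.std)
        if is_outlier:
            yield i, x
        else:
            # Only normal-looking samples update the baseline.
            stats.update(x)


values = [100, 101, 99, 100, 102, 98, 100, 150, 101]
print(list(stream_alerts(values)))  # [(7, 150)]
```

Because no past samples are stored, memory use stays constant however long the stream runs; the trade-off is that a single global baseline ignores operating-mode changes, so in practice the statistics would likely be kept per mode.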

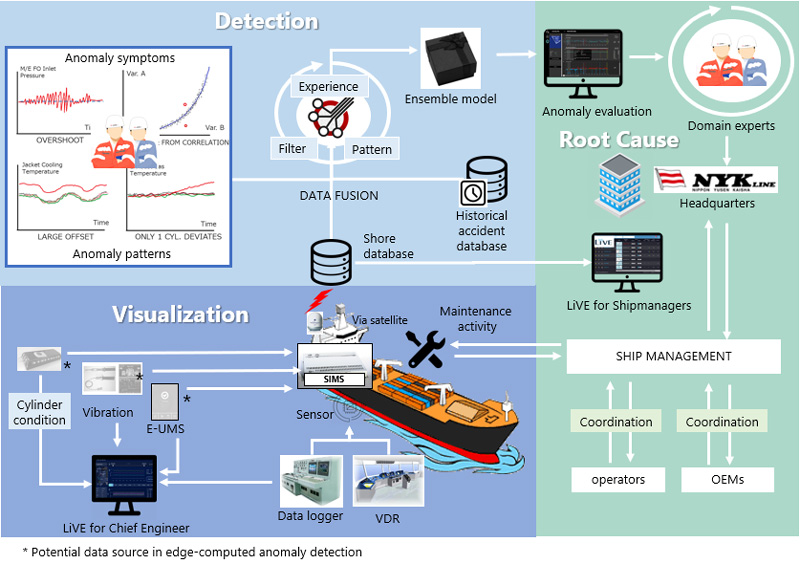

It has been a great benefit to my research activities, such as developing LiVE applications*1, that NYK has advanced one step at a time: first visualizing the data and checking it on land in order to report malfunctions of a ship’s engine during operation, rather than aiming from the outset to build a system that automatically generates anomaly detection alerts. As a result, I was able to focus on automating the act of monitoring the state of the engine by watching the data currently visualized in LiVE. The point is that not all alerts are equally important. If the anomaly detection system can perform deeper analysis and issue only the most meaningful alerts, users can contact the engineers on board the ship or the engine manufacturer and consider their response with confidence. This will enable all parties to focus on more important tasks, such as notifying operators of the risk of freight delays and preparing for maintenance at the most convenient time. The paradox, however, is that the results of a machine learning analysis will not work, no matter how good the model or how accurate the prediction, unless they are interpretable by the user*2. AI must “persuade” users so that they can achieve their intended goal. Therefore, even at the R&D stage, close communication with real users is a top priority for me, and I believe it is a shortcut to establishing users’ trust.

Enabling automated systems that support enhancement of safety of operation

Aiming to improve predictive analytics by utilizing the hybrid model

From the standpoint of a shipping company, a shipbuilding engineer, or a big-data researcher, I feel that the distance between a ship and land is no longer a constraint that makes it difficult to operate a ship. If you have a virtually connected environment, you can work on issues in the same dimension. When AI and data science are leveraged, continuous monitoring of ships becomes possible; we can then find anomalies by analyzing the data from various angles, translate them into information that users can understand, and encourage users to take appropriate actions.

If there is an error in an AI analysis, the user should be able to provide feedback so that the AI learns from the error and improves the accuracy of abnormality detection. The reverse also applies: the learning algorithm can actively query the user (the teacher) to label an important piece of information that it has flagged, and then learn from its mistakes. This framework is called active learning. If such a system can be developed, a new environment can be created in which AI and humans work closely together and strengthen each other. The output of the system is meaningless unless it leads to meaningful actions. With that in mind, I would like to keep pursuing research at MTI into the potential of AI and big data.
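The querying half of active learning can be sketched with uncertainty sampling, one common strategy in which the algorithm asks the user about the cases it is least sure of. The example below is a minimal illustration under assumptions: the predicted anomaly probabilities are invented, `ask_user` is a hypothetical callback standing in for a marine engineer's judgment, and the retraining step that would follow is left out.

```python
from typing import Callable, Dict, List, Sequence


def select_queries(probabilities: Sequence[float], budget: int) -> List[int]:
    """Uncertainty sampling: pick the samples whose predicted anomaly
    probability is closest to 0.5, i.e. where the model is least sure."""
    ranked = sorted(range(len(probabilities)),
                    key=lambda i: abs(probabilities[i] - 0.5))
    return ranked[:budget]


def collect_labels(probabilities: Sequence[float],
                   ask_user: Callable[[int], bool],
                   budget: int) -> Dict[int, bool]:
    """Ask the user to label only the most uncertain cases."""
    return {i: ask_user(i) for i in select_queries(probabilities, budget)}


# Hypothetical model outputs for five candidate alerts.
probs = [0.02, 0.48, 0.97, 0.60, 0.10]

# Hypothetical expert feedback: only alert 3 is a real abnormality.
new_labels = collect_labels(probs, ask_user=lambda i: i == 3, budget=2)
print(new_labels)  # {1: False, 3: True}
```

Limiting the number of queries with a budget respects the engineers' time: the confident predictions (0.02, 0.97) are left alone, and the expert is consulted only where a label is most likely to change the model.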

AI shall enable decision making support at various layers of operation

Finally, I think MTI, a company that conducts R&D with the full support of its parent company, NYK, is a unique research institution. The use of AI to analyze the vast amount of accumulated ship data is at the core of NYK’s strategy, and as the analysis proceeds, it will become possible to predict dangers, failures, and obstacles during operations, with safety in ship operations as the main goal. Not only was I able to get involved in MTI’s research activities and given the opportunity to address immediate and future challenges, but I was also given the opportunity to expand my network with external research institutions around the world, which has broadened my insights.

During a workshop with DNV GL (Second from the left on the bottom row: Mr. Hangga)

<References>

*1 The NYK Group’s digitalization activities: https://www.nyk.com/english/csr/technology/example/

*2 Smith, P.J., Hoffman, R.R. (2017). Cognitive Systems Engineering: The Future for a Changing World. CRC Press. ISBN 9781472430496